From the perspective of Windows, a disk is a device that provides addressable long-term storage for blocks of data, which are accessed using file system drivers. In other words, each byte on the disk does not have its own address, but each block does have an address. These blocks are known as sectors and are the basic unit of storage and transfer to and from the device (in other words, all transfers must be a multiple of the sector size). Whether the device is implemented using rotating magnetic media (hard disk or floppy disk) or solid state memory (flash disk or thumb drive) is irrelevant.

Windows supports a wide variety of interconnect mechanisms for attaching a disk to a system, including SCSI, SAS (Serial Attached SCSI), SATA (Serial Advanced Technology Attachment), USB, SD/MMC, and iSCSI.

The typical disk drive (often referred to as a hard disk) is built using one or more rigid rotating platters covered in a magnetic material. An arm containing a head moves back and forth across the surface of the platter reading and writing bits that are stored magnetically.

While the disk interconnect mechanisms have been evolving since IBM introduced hard disks in 1956 and have become faster and more intelligent, the underlying disk format has changed very little, except for annual increases in areal density (the number of bits per square inch). Since the inception of disk drives, the data portion of a disk sector has typically been 512 bytes.

Disk storage areal density has increased from 2,000 bits per square inch in 1956 to over 650 billion bits per square inch in 2011, with most of that gain coming in the last 15 years. Disk manufacturers are reaching the physical limits of current magnetic disk technology, so they are changing the format of the disks: increasing the sector size from 512 bytes to 4,096 bytes, and changing the size of the error correcting code (ECC) from 50 bytes to 100 bytes. This new disk format is known as the advanced format. The size of the advanced format sector was chosen because it matches the x86 page size and the NTFS cluster size. The advanced format provides about 10 percent greater capacity by reducing the amount of overhead per sector (everything except the data area is overhead) and through better error correcting capabilities. (A single 100-byte ECC is better than eight 50-byte ECCs.) The downside to advanced format disks is potentially wasted space for small files, but as you’ll see in Chapter 12, NTFS has a mechanism for efficiently storing small files.
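The ECC part of that overhead reduction can be checked with a little arithmetic. The sketch below compares only the ECC bytes quoted above (50 bytes per 512-byte sector versus 100 bytes per 4,096-byte sector). Other per-sector overhead is not quantified in the text, so it is left out, and the result should not be read as the full capacity gain. The constant and function names are illustrative.

```python
# ECC cost of storing 4,096 bytes of data, using only the figures quoted above.
LEGACY_DATA_BYTES = 512
ADVANCED_DATA_BYTES = 4096
LEGACY_ECC_BYTES = 50
ADVANCED_ECC_BYTES = 100

# Eight legacy sectors hold the same data as one advanced format sector.
legacy_sectors = ADVANCED_DATA_BYTES // LEGACY_DATA_BYTES
legacy_ecc_total = legacy_sectors * LEGACY_ECC_BYTES
ecc_bytes_saved = legacy_ecc_total - ADVANCED_ECC_BYTES


def ecc_overhead_ratio(ecc_bytes, data_bytes):
    """Return ECC bytes as a fraction of the data bytes they protect."""
    return ecc_bytes / data_bytes


print(f"legacy ECC per 4,096 data bytes:   {legacy_ecc_total} bytes "
      f"({ecc_overhead_ratio(legacy_ecc_total, ADVANCED_DATA_BYTES):.2%})")
print(f"advanced ECC per 4,096 data bytes: {ADVANCED_ECC_BYTES} bytes "
      f"({ecc_overhead_ratio(ADVANCED_ECC_BYTES, ADVANCED_DATA_BYTES):.2%})")
print(f"ECC bytes saved per 4,096 data bytes: {ecc_bytes_saved}")
```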

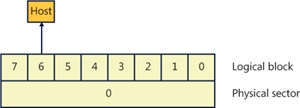

Advanced format disks provide an emulation mechanism (known as 512e) for legacy operating systems that understand only 512-byte sectors. With 512e, the host does not know that the disk supports 4,096-byte sectors; it continues to read and write 512-byte sectors (called logical blocks). The disk’s controller will translate a logical block number into the correct physical sector. For example, if the host issues a read request for logical block number 6, then the disk controller will read physical sector number 0 into its internal buffer and return only the 512-byte portion corresponding to logical block 6 to the host, as shown in Figure 9-1.
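The translation described above is integer division and remainder. The following is a minimal sketch of that mapping, assuming eight 512-byte logical blocks per 4,096-byte physical sector. The `disk` byte array and the function names are hypothetical stand-ins for the controller's internals, not an actual firmware interface.

```python
LOGICAL_BLOCK_SIZE = 512       # bytes per logical block seen by the host
PHYSICAL_SECTOR_SIZE = 4096    # bytes per advanced format physical sector
BLOCKS_PER_SECTOR = PHYSICAL_SECTOR_SIZE // LOGICAL_BLOCK_SIZE


def map_logical_block(lba):
    """Return (physical sector number, byte offset inside that sector)."""
    sector = lba // BLOCKS_PER_SECTOR
    offset = (lba % BLOCKS_PER_SECTOR) * LOGICAL_BLOCK_SIZE
    return sector, offset


def read_logical_block(disk, lba):
    """Emulated 512e read: fetch the whole physical sector, return one slice."""
    sector, offset = map_logical_block(lba)
    start = sector * PHYSICAL_SECTOR_SIZE
    # The controller reads the full physical sector into its internal buffer...
    buffer = disk[start:start + PHYSICAL_SECTOR_SIZE]
    # ...and hands back only the 512 bytes the host asked for.
    return bytes(buffer[offset:offset + LOGICAL_BLOCK_SIZE])


# Logical block 6 lives in physical sector 0, at byte offset 6 * 512.
print(map_logical_block(6))
```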

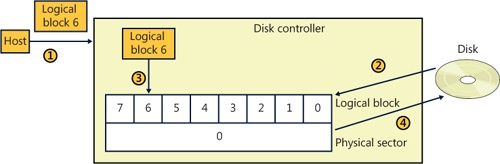

Writes are a little more complicated in that they require the disk’s controller to perform a read-modify-write operation, as shown in Figure 9-2.

1. The host writes logical block 6 to the controller.
2. The controller maps logical block 6 to physical sector 0 and reads the entire sector into the controller’s memory.
3. The controller copies logical block 6 into its position within the copy of the physical sector in the controller’s memory.
4. The controller writes the 4,096-byte physical sector from memory back to the disk.
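The read-modify-write sequence above can be sketched as follows, assuming the same geometry of eight 512-byte logical blocks per 4,096-byte physical sector. The `disk` byte array and `write_logical_block` are hypothetical and model only the data movement, not a real controller.

```python
LOGICAL_BLOCK_SIZE = 512
PHYSICAL_SECTOR_SIZE = 4096
BLOCKS_PER_SECTOR = PHYSICAL_SECTOR_SIZE // LOGICAL_BLOCK_SIZE


def write_logical_block(disk, lba, data):
    """Emulated 512e write. Returns the number of bytes physically written."""
    if len(data) != LOGICAL_BLOCK_SIZE:
        raise ValueError("a logical block must be exactly 512 bytes")
    # Map the logical block to its physical sector and offset.
    sector = lba // BLOCKS_PER_SECTOR
    offset = (lba % BLOCKS_PER_SECTOR) * LOGICAL_BLOCK_SIZE
    start = sector * PHYSICAL_SECTOR_SIZE
    # Read: copy the entire physical sector into controller memory.
    buffer = bytearray(disk[start:start + PHYSICAL_SECTOR_SIZE])
    # Modify: place the host's 512 bytes at their position in the copy.
    buffer[offset:offset + LOGICAL_BLOCK_SIZE] = data
    # Write: put the full 4,096-byte sector back on the disk.
    disk[start:start + PHYSICAL_SECTOR_SIZE] = buffer
    return len(buffer)


disk = bytearray(b"\xff" * (2 * PHYSICAL_SECTOR_SIZE))
written = write_logical_block(disk, 6, b"\x00" * LOGICAL_BLOCK_SIZE)
print(f"host wrote {LOGICAL_BLOCK_SIZE} bytes, disk wrote {written} bytes")
```

In this model a 512-byte host write costs one 4,096-byte read plus one 4,096-byte write, which is the source of the 512e penalty.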

Obviously, there is a performance penalty associated with using 512e, but advanced format disks will still work with legacy operating systems.

Windows supports native 4,096-byte advanced format sectors, so there is no additional read-modify-write overhead. As you will see in Chapter 12, NTFS was written to support sectors of more than 512 bytes and by default issues disk I/Os using a 4,096-byte cluster. The Windows cache manager (see Chapter 11) will attempt to reduce the penalty of applications assuming 512-byte sectors; however, applications should be upgraded to query the size of a disk’s sectors (by issuing an IOCTL_STORAGE_QUERY_PROPERTY I/O request and examining the returned BytesPerPhysicalSector value) and not assume 512-byte sectors when performing sector I/O. It is very important that partitioning tools understand the size of a disk’s physical sectors and align partitions to physical sector boundaries because partitions must be an integral number of physical sectors.
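The alignment requirement reduces to a modulo check once the physical sector size is known. The sketch below assumes the size has already been obtained (for example, from BytesPerPhysicalSector) and is passed in as a plain number. The helper names are hypothetical, and the two starting offsets are illustrative values expressed in 512-byte logical blocks.

```python
def is_aligned(byte_offset, physical_sector_size=4096):
    """True if the offset falls on a physical sector boundary."""
    return byte_offset % physical_sector_size == 0


def align_up(byte_offset, physical_sector_size=4096):
    """Round an offset up to the next physical sector boundary."""
    sectors = -(-byte_offset // physical_sector_size)  # ceiling division
    return sectors * physical_sector_size


# A partition starting at logical block 63 begins mid-sector on a 4,096-byte disk,
# while one starting at logical block 2,048 (1 MB) begins on a boundary.
for start_lba in (63, 2048):
    offset = start_lba * 512
    print(start_lba, offset, is_aligned(offset), align_up(offset))
```

A partition that starts off a boundary turns every cluster-sized write into a write that straddles two physical sectors, so each one pays the read-modify-write cost described earlier.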

Recently, the cost of manufacturing flash memory has decreased to the point where manufacturers are building storage subsystems with a disk-type interface, calling the device a solid state disk (SSD) or flash disk. As far as Windows is concerned, an SSD is a disk, but there are some important differences between a rotating disk and an SSD that Windows has to support. Before getting into the details of how Windows supports SSDs, let’s look at how an SSD is implemented.

Flash memory in some respects is very similar to a computer’s RAM (random access memory), except that flash memory does not lose its contents when the power is removed, which means that flash memory is nonvolatile. The most common types of flash memory are NOR and NAND. NOR flash memory is operationally the closest to RAM in that each byte is individually addressable, while NAND flash memory is organized into blocks, like a disk. Typically, NOR-type flash memory is used to hold the BIOS on your computer’s motherboard, and NAND-type flash memory is used in SSDs.

The most important difference between flash memory and RAM is that RAM can be read and written an almost infinite number of times, while flash memory can be overwritten something less than 100,000 times. (Depending on the type of flash memory, it may be as few as 1,000 times.) In effect, flash memory wears out, so flash memory should be treated more like media with a limited lifetime (such as a floppy disk) than RAM or a magnetic disk. Another major difference between flash memory and RAM is that flash memory cannot be updated in place; a block must be erased before it can be written (even for NOR-type flash memory). Flash memory is significantly faster than magnetic disks (usually by a factor of 100,000 or so; access time: 50 nanoseconds versus 5 milliseconds), but it is slower than RAM (usually by a factor of 50). From a practical perspective, memory access time is not the whole story because flash memory is not on the system memory bus. Instead, it sits behind a disk-type controller interface on an I/O bus, so in reality flash may be only on the order of 1,000 times faster than a magnetic disk, and in some workloads a rotating magnetic disk can outperform a low-end SSD.

NAND-type flash memory is most commonly used in SSDs, so that is what we will examine in detail. NAND-type flash comes in two types:

- Single-level cell (SLC) stores 1 bit per internal cell, has a higher number of program/erase cycles (on the order of 100,000), and is significantly faster than multilevel cell (MLC), but it is much more expensive than MLC.
- Multilevel cell (MLC) stores multiple bits per internal cell and is significantly cheaper than SLC. MLC needs more ECC bits than SLC, has fewer erase cycles (~5,000), and consumes more power than SLC.

NAND-type flash is typically organized into 4,096-byte pages (which may be exposed as eight 512-byte sectors or a single 4,096-byte sector), which are the smallest readable or writable units, and the pages are grouped into blocks of 64 to 1,024 pages, with thousands of blocks per chip. As with a magnetic disk, there is overhead on each page, with ECC, page health, and spare bits. The block is the smallest erasable unit, so to change a single sector within a page requires that the entire block be erased and then rewritten. (Flash cells can be written only after they have been erased.) This means that writing a sector to an empty block is very fast, but if there is not an available empty block, the controller has to preserve the existing contents of a block, erase the block, and then rewrite it with the updated sector in place.

Notice that what started as a write to a sector (512 bytes) became a write of an entire block. For this example, if we assume 128 pages in a block (eight 512-byte sectors per page, so the block contains 1,024 sectors) and a completely full block, then the write would take 1,023 times longer than the write of a single sector to an empty block. This example is a worst case and is decidedly not the norm, but it illustrates an important aspect of SSDs: as more and more of the SSD’s memory is consumed, it will have to rewrite substantially more data than a single sector. In effect, SSDs slow down as they fill up. This has important implications that are addressed in the next section, File Deletion and the Trim Command.
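The worst case above can be reproduced with a small model. This sketch assumes 4,096-byte pages exposed as 512-byte sectors and 128 pages per block, as in the example. It also assumes the controller copies the block out, erases it, applies the update, and programs everything back. That ordering is an assumption for illustration, and `rewrite_sector_in_full_block` is a hypothetical name.

```python
SECTOR_SIZE = 512
PAGE_SIZE = 4096
PAGES_PER_BLOCK = 128  # the example's assumption

sectors_per_page = PAGE_SIZE // SECTOR_SIZE
sectors_per_block = sectors_per_page * PAGES_PER_BLOCK
extra_sectors = sectors_per_block - 1  # beyond the one the host asked to write


def rewrite_sector_in_full_block(block, sector_index, data):
    """Update one sector of a full block. Returns the sectors programmed."""
    saved = list(block)              # copy the block's contents to controller memory
    for i in range(len(block)):
        block[i] = None              # erase: the whole block is cleared at once
    saved[sector_index] = data       # apply the single-sector update to the copy
    for i, payload in enumerate(saved):
        block[i] = payload           # program every sector back
    return len(saved)


block = [b"old"] * sectors_per_block
programmed = rewrite_sector_in_full_block(block, 5, b"new")
print(f"sectors per block: {sectors_per_block}")
print(f"sectors programmed for a one-sector update: {programmed}")
print(f"extra sectors written: {extra_sectors}")
```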

As a block wears out, eventually it will fail to erase. Also, the more a block is erased and rewritten, the slower it becomes (a result of the physics behind how flash memory is implemented). This means that an SSD will only get slower as you use it, even on an empty block. For example, on a 1-GB USB MLC flash disk with 128 pages per block (giving us 2,048 blocks), erasing and writing one block per second would wear out all the blocks in 23.7 days (assuming a maximum of 1,000 erase cycles per block, which is typical for the cheaper flash disks). Erasing and writing the same block once per second will wear out that block in only about 16.7 minutes! SSDs typically have spare blocks held in reserve (often 20 percent of the SSD’s capacity) so that if a block wears out, the data is moved to a spare block. Clearly, flash memory cannot be used the same way as RAM or a magnetic disk.
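The wear-out figures in this example follow from straightforward arithmetic. The sketch below assumes 1 GB means 2^30 bytes and uses the 4,096-byte page size given earlier. Both are assumptions needed to arrive at 2,048 blocks.

```python
CAPACITY_BYTES = 1 * 1024**3   # 1 GB, taken as 2**30 bytes (assumption)
PAGE_SIZE = 4096               # bytes per page (assumption, from the typical layout)
PAGES_PER_BLOCK = 128
MAX_ERASE_CYCLES = 1000        # per block, as in the example
ERASES_PER_SECOND = 1

block_size = PAGE_SIZE * PAGES_PER_BLOCK
block_count = CAPACITY_BYTES // block_size

# Spreading the erases evenly: every block absorbs its full cycle budget.
seconds_all_blocks = block_count * MAX_ERASE_CYCLES / ERASES_PER_SECOND
days_all_blocks = seconds_all_blocks / 86_400

# Hammering a single block: only that block's budget is consumed.
minutes_one_block = MAX_ERASE_CYCLES / ERASES_PER_SECOND / 60

print(f"blocks: {block_count}")
print(f"all blocks worn out after {days_all_blocks:.1f} days")
print(f"one block worn out after {minutes_one_block:.1f} minutes")
```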

The flash memory controller implements a technique called wear-leveling to spread the wear (erases) across the SSD. Wear-leveling depends on the fact that most of the data that you write to a disk is static; that is, it does not change often (it is usually read frequently, but that doesn’t cause wear). Of course, there is also dynamic data (such as log files) that changes frequently. There are many different types of wear-leveling algorithms, but describing them is beyond the scope of this book. The important concept to understand about wear-leveling is that the controller will move data around within the flash memory in an attempt to spread writes across all the flash memory, thus prolonging the overall life of the SSD. An implication of wear-leveling is that more blocks are subjected to more frequent program/erase cycles in an attempt to extend the overall life of the flash memory, but when the drive fails (as they all do), then more blocks will fail at the same time. Keep in mind that the SSD industry is moving toward the point where SSDs will advertise their health more explicitly, and at the point of impending write failure they will become read-only drives.
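To make the idea concrete, here is a deliberately simplified sketch of one possible policy: always direct the next erase to the block with the fewest erases so far. This is an illustration only, not a description of any real controller's algorithm. It ignores the relocation of static data that real wear-leveling performs, and the class and method names are hypothetical.

```python
class WearLeveler:
    """Toy policy: send each erase to the least-worn block."""

    def __init__(self, block_count):
        self.erase_counts = [0] * block_count

    def pick_block(self):
        """Choose the block with the fewest erases and record one more erase."""
        block = min(range(len(self.erase_counts)),
                    key=self.erase_counts.__getitem__)
        self.erase_counts[block] += 1
        return block


leveler = WearLeveler(block_count=4)
chosen = [leveler.pick_block() for _ in range(8)]
print("blocks chosen:", chosen)
print("erase counts: ", leveler.erase_counts)
```

Eight writes aimed at a single block without leveling would put all eight erases on that block. With this policy each of the four blocks absorbs two.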

The file system keeps track of which areas of a disk are currently in use for each file, and when a file is deleted it does not zero all the areas on the disk that contained the file—if it did, then deleting a large file would take longer than deleting a small file, and file undelete utilities would not work. Instead, the file system driver will mark those areas of the disk as available in its data structures (usually referred to as metadata; see Chapter 12 for more information). This is not a problem for magnetic disks because they read and write sectors natively, but SSDs do not read and write sectors natively (recall that the size of the writable unit, the page, is much smaller than the size of the erasable unit, the block).

SSDs have to manage the contents of pages and blocks when updating a sector. This becomes a huge problem because the SSD does not know that the contents of a page are free unless it has been erased. The SSD would continue to preserve “deleted” data when updating a sector or during wear-leveling, reducing the amount of free space available to the SSD controller. The end result would be that the speed of the SSD would degrade up to the point at which all sectors have been written (at least once), and the only way to speed it up again would be to erase the entire drive. This is exactly the behavior that existed in early SSDs.

The solution to this problem was the introduction of the trim command to the SSD’s controller. The file system detects that the SSD supports the trim command by sending the I/O request IOCTL_STORAGE_QUERY_PROPERTY with the property ID StorageDeviceTrimProperty down the storage stack (covered later in this chapter). When a file is deleted or truncated on a disk that supports the trim command, the file system sends the list of sectors that the file occupied to the disk driver, using the I/O request IOCTL_STORAGE_MANAGE_DATA_SET_ATTRIBUTES with the action parameter DeviceDsmAction_Trim. When the disk driver receives this I/O request, it sends a trim command to the SSD, notifying the SSD that those sectors are now free and may be erased and repurposed at the SSD’s convenience. This lets the SSD reclaim those sectors during an update or wear-leveling operation, thereby improving the performance of the SSD. Note that the trim command cannot be queued internally within the SSD’s controller and executes synchronously, which may manifest as a noticeable pause when a large file is being deleted.
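The benefit of trim can be seen in a toy model of a single flash block. The sketch assumes the controller tracks which pages it believes hold valid data and must copy all of those pages whenever it reclaims the block. It models only that bookkeeping, not the I/O requests named above, and `FlashBlock` and its methods are hypothetical names.

```python
class FlashBlock:
    """Tracks which pages the controller believes still hold valid data."""

    def __init__(self, page_count):
        # After every page has been written once, all of them look valid.
        self.valid_pages = set(range(page_count))

    def trim(self, pages):
        """The host reports that these pages no longer hold file data."""
        self.valid_pages -= set(pages)

    def pages_to_copy_on_reclaim(self):
        """Pages that must be preserved when the block is erased and rewritten."""
        return len(self.valid_pages)


block = FlashBlock(page_count=128)

# A file occupying 100 pages is deleted. Without trim the controller cannot
# tell, so it would still preserve all 128 pages on the next reclaim.
print("without trim:", block.pages_to_copy_on_reclaim())

# With trim, the deleted file's pages drop out of the set to preserve.
block.trim(range(100))
print("with trim:   ", block.pages_to_copy_on_reclaim())
```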

While Windows does support SSDs, Microsoft recommends that they be backed up frequently if they are being used for important data. A standard disk defragmenter should never be used on an SSD because it will wear out the flash very quickly. The Windows defragmenter will not attempt to defragment an SSD. (Defragmenting an SSD isn’t generally useful because file fragmentation does not slow down access to a file on an SSD in the same way that it does on a magnetic disk.) As we’ll see in Chapter 12, NTFS was not designed with short-lived (flash memory) disks in mind, and it frequently issues lots of small writes to its transaction log, which is important for increasing reliability but causes additional wear to the flash memory. Using an SSD as your C: drive may drastically increase the speed of your system, but understand that the SSD will wear out before a magnetic disk would.